Running inference with your fine-tuned model

Once fine-tuning is complete, you can run inference against the new model. This section does so with ONNX Runtime GenAI.

Install the ORT GenAI SDK

Note: if you have not installed CUDA 12.1 yet, install it first. For installation instructions, see https://developer.nvidia.com/cuda-12-1-0-download-archive
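Before moving on, it can help to confirm which CUDA toolkit is actually on the machine. The sketch below is one way to do that, assuming `nvcc` is on the `PATH` and prints its usual `release X.Y` line. The helper names `parse_nvcc_release` and `installed_cuda_version` are illustrative and not part of any SDK.

```python
import re
import shutil
import subprocess


def parse_nvcc_release(output):
    """Return (major, minor) parsed from `nvcc --version` text, or None if absent."""
    match = re.search(r"release (\d+)\.(\d+)", output)
    if match is None:
        return None
    return int(match.group(1)), int(match.group(2))


def installed_cuda_version():
    """Return the toolkit version reported by nvcc, or None if nvcc is not found."""
    nvcc = shutil.which("nvcc")
    if nvcc is None:
        return None
    result = subprocess.run([nvcc, "--version"], capture_output=True, text=True)
    return parse_nvcc_release(result.stdout)


if __name__ == "__main__":
    version = installed_cuda_version()
    if version == (12, 1):
        print("CUDA 12.1 toolkit found")
    else:
        print(f"Expected CUDA 12.1, found: {version}")
```

A result of `None` only means `nvcc` was not found on the `PATH`; the toolkit may still be installed in a location the shell does not search.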

Once CUDA is installed, install the CUDA build of the ONNX Runtime GenAI SDK:

pip install numpy -U

pip install onnxruntime-genai-cuda --pre --index-url=https://aiinfra.pkgs.visualstudio.com/PublicPackages/_packaging/onnxruntime-genai/pypi/simple/

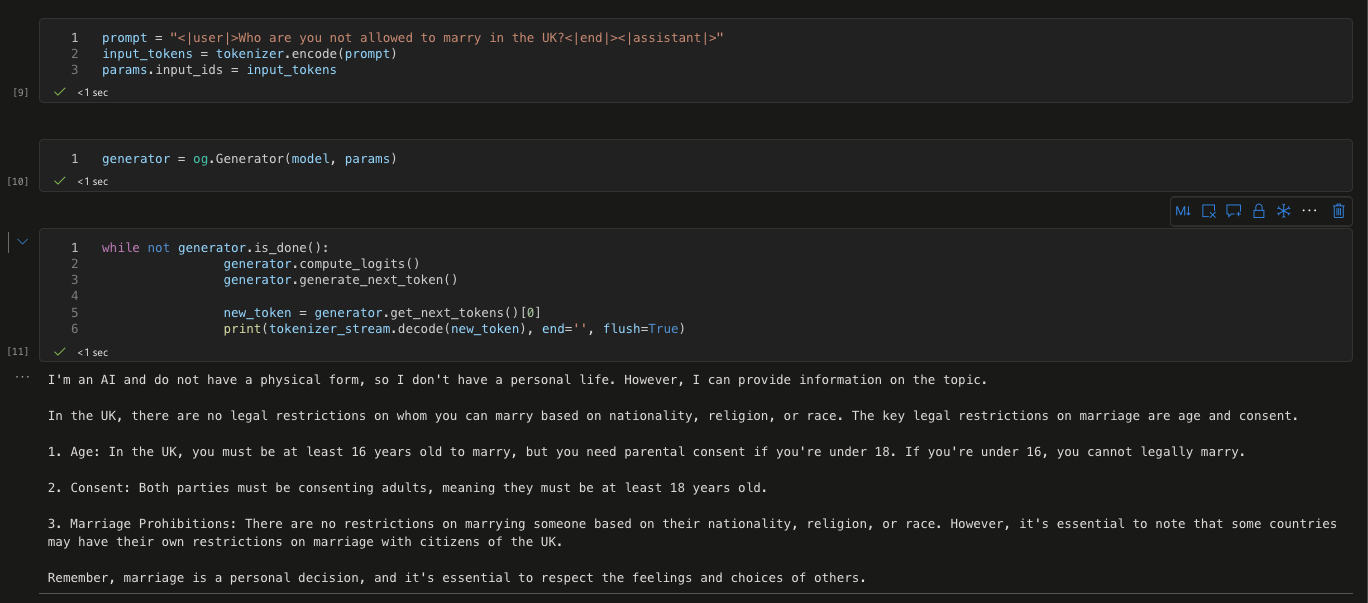
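As a quick sanity check after the install, the following sketch reports whether the two packages can be found by the current Python interpreter. It assumes the import name `onnxruntime_genai` (the one used in the inference code in this section). The `is_installed` helper is hypothetical.

```python
import importlib.util


def is_installed(module_name):
    """Return True if a top-level module can be located without importing it."""
    return importlib.util.find_spec(module_name) is not None


if __name__ == "__main__":
    for name in ("numpy", "onnxruntime_genai"):
        status = "installed" if is_installed(name) else "missing"
        print(f"{name}: {status}")
```

If a package shows as missing, the `pip` command most likely ran in a different environment from the interpreter running this check.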

Run inference with the model

import onnxruntime_genai as og

model = og.Model('Replace this with the path to your ONNX model folder')
tokenizer = og.Tokenizer(model)
tokenizer_stream = tokenizer.create_stream()

search_options = {"max_length": 1024, "temperature": 0.3}
params = og.GeneratorParams(model)
params.try_use_cuda_graph_with_max_batch_size(1)
params.set_search_options(**search_options)

prompt = "<|user|>Who are you not allowed to marry in the UK?<|end|><|assistant|>"
input_tokens = tokenizer.encode(prompt)
params.input_ids = input_tokens

generator = og.Generator(model, params)

while not generator.is_done():
    generator.compute_logits()
    generator.generate_next_token()
    new_token = generator.get_next_tokens()[0]
    print(tokenizer_stream.decode(new_token), end='', flush=True)
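To try other questions without retyping the chat markers, the prompt string can be built by a small helper. This is a minimal sketch that assumes the single-turn `<|user|>…<|end|><|assistant|>` layout shown in the prompt above. `build_prompt` is an illustrative name, not an ONNX Runtime GenAI function.

```python
def build_prompt(user_message):
    """Wrap a user message in the single-turn chat markers used in this section."""
    return f"<|user|>{user_message}<|end|><|assistant|>"


if __name__ == "__main__":
    # Prints the wrapped prompt, ready to pass to tokenizer.encode(...)
    print(build_prompt("Who are you not allowed to marry in the UK?"))
```

The returned string can be passed to `tokenizer.encode` in place of the hard-coded `prompt`. If your fine-tuning data used a different template (for example, with a system message or newlines between markers), adjust the helper to match it.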

Test your results